The Healthcare AI Policy Moment: What We Must Get Right

The Policy Moment

Through the Health Sector AI Request for Information, the U.S. Department of Health and Human Services signaled that AI adoption in clinical care is now a federal priority. HHS is evaluating how regulation, reimbursement, governance, and interoperability policy can accelerate responsible deployment while maintaining safety and accountability. The decisions made now will shape how AI integrates into care delivery for the next decade.

Why AI, Why Now

The pressure driving healthcare AI adoption has two interconnected roots. The first is clinical: an aging population, rising chronic disease prevalence, and increasingly complex therapies are compounding demand on health systems at the same time that primary care and nursing shortages are limiting capacity. The second is financial: labor represents 50 to 70 percent of total operating cost for most health systems, and those costs are growing faster than revenue. These pressures are not independent—clinical complexity directly amplifies the labor cost crisis, and workforce shortages and reimbursement challenges make the financial pressure harder to absorb.

AI automation should be applied to the workflows where this combined pressure is most acute, such as revenue cycle, prior authorization, clinical documentation, scheduling, and utilization management. Applied there, automation is the mechanism by which health systems can sustain financial viability and clinical quality at the same time. We describe this as better margins for stronger missions.

HHS should also be asking a question that is straightforward but consequential: what should clinical AI not do? It should not optimize for engagement over clinical outcomes. It should not serve as a channel for marketing or promoting non-peer-reviewed science under the guise of education. When general-purpose consumer AI platforms that derive revenue from advertising gain access to clinical environments, the structural conflict between commercial incentive and clinical integrity does not disappear because the interface looks clinical. Purpose-built healthcare AI is the architecture most likely to uphold that standard of clinical integrity.

What We Told HHS

Our response addressed the specific questions HHS posed: barriers to innovation, regulatory priorities, governance of non-device AI, interoperability, and where AI has and hasn't met expectations.

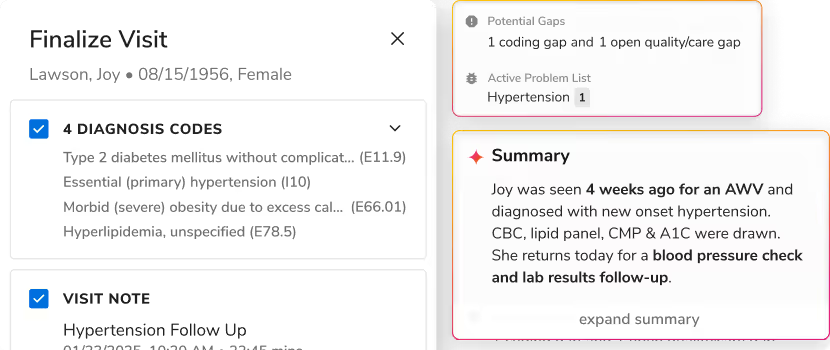

Barriers to innovation. We identified three primary obstacles. First, fragmented data and governance infrastructure: health systems attempting to adopt AI encounter data scattered across siloed EHR, billing, scheduling, and HR systems with no unified governance layer. Second, data access restrictions imposed by EHR vendors: restrictions on automated API-based data access, inconsistent FHIR implementations, and prohibitive fees prevent health systems from building the unified data foundation that operational AI requires. Third, workflow integration gaps: AI tools that require staff to switch to a separate system achieve minimal adoption regardless of technical quality.

Regulatory priorities. We recommended that HHS place one action above all other immediate priorities: finalizing and enforcing HTI-5's information blocking provisions, which are a prerequisite to the unified data access that operational AI requires.

Governance of non-device AI. We urged HHS to articulate governance principles rather than building a new federal compliance apparatus. Mandatory federal certification for non-device healthcare AI would create compliance overhead that disadvantages smaller developers, produce technical standards that become outdated faster than they can be revised, and add bureaucratic burden to an already heavily regulated sector. The appropriate federal role is to define the standard and provide the incentive.

Interoperability. AI is most powerful when it can reason across the full enterprise: clinical, financial, operational, and workforce data. However, current standards focus almost exclusively on clinical data exchange, leaving operational and financial data without standardized mechanisms. Inconsistent FHIR implementation and the near-universal absence of standardized write-back support prevent AI from completing workflows that require returning results to the EHR.

Where AI has and hasn't met expectations. AI that automates high-volume, rules-based administrative work is beginning to meet expectations. Prior authorization automation has produced measurable cost savings through fewer denials, lower labor cost per claim, and faster cash collection. Enterprise data intelligence, where teams can query across consolidated clinical, financial, and operational data, has replaced slow manual reporting cycles. The common success factor is integration: AI embedded in existing workflows achieves dramatically higher adoption than tools requiring workflow changes. Where AI has fallen short, the pattern is equally clear: fragmented, point-tool deployments without unified governance.

The Data Access Question Underneath the AI Question

Everything in our RFI response depends on a premise that not everyone involved in healthcare innovation shares: that data should flow freely, programmatically, and without unreasonable barriers to the AI systems health systems choose to deploy.

Incumbent health IT vendors have strong incentives to frame interoperability in ways that preserve their position as gatekeepers of health data infrastructure. When data access restrictions, prohibitive fees, inconsistent API implementations, and take-it-or-leave-it contractual terms are defended as protecting patient safety or intellectual property, it is worth asking who those structures actually protect. Safety and IP concerns are real, but they should be addressed through governance standards and accountability frameworks, not through mechanisms that allow the platforms storing health data to decide which AI tools get access, under what conditions, and at what price.

Alignment Will Determine the Outcome

Healthcare is entering a decade in which capacity, not innovation alone, will determine sustainability. Demand is rising, workforce supply is constrained, and financial pressure is persistent. AI will not solve those realities on its own—but deployed within the right infrastructure and governance framework, it can meaningfully expand the system's ability to deliver high-quality care without proportionally expanding cost.

The policy decisions being shaped now will determine whether AI becomes another layer of fragmentation or a unifying force across the healthcare enterprise. AI in healthcare needs alignment: between clinical goals and financial sustainability, between innovation and accountability, between federal standards and real-world implementation, and between the interoperability promises the industry has made and the data access it actually provides. If those elements come together, AI can move beyond isolated pilots and become durable infrastructure that strengthens both care delivery and the institutions that support it. Patients served by the broad healthcare ecosystem deserve nothing less.
