What Happens When Your Analytics Vendor Can Measure but Not Act?

The Medicare Advantage regulatory environment just changed faster than most payer analytics platforms were designed to handle. CMS expanded RADV audits from 60 plans to over 550 eligible MA contracts starting May 2025, scaling its audit workforce from 40 to 2,000 coders by September. Star Ratings methodology is being recalibrated mid-cycle. And federal estimates suggest MA plans may be overbilling by up to $43 billion annually — making every plan a target, not just outliers.

This is the environment in which your analytics platform needs to perform. Not "report." Perform. And for payer CEOs running on legacy analytics vendors, the question is no longer whether the platform delivers clean dashboards. It's whether clean dashboards are enough when CMS is auditing at machine speed and your competitors are closing gaps in real time.

The Measurement Trap: How Retrospective Analytics Became a Liability

For a decade, the payer analytics playbook was straightforward: aggregate claims data, run retrospective analyses, generate reports, hand them to operational teams, and hope the right interventions happened before the measurement year closed. Legacy analytics vendors built significant businesses on this model, serving hundreds of health plans with data drawn from billions of medical events.

That model worked when CMS audited a handful of plans per year and Star Ratings moved predictably. It does not work when RADV sample sizes jump from 35 to 200 records per audit, when the Health Equity Index reshapes Star calculations, and when CMS's LEAD model signals a shift from HCC-based risk adjustment to AI-inferred scores.

Here's the structural problem: most legacy platforms were built for batch processing. Claims come in; reports go out. The gap between identification and intervention is measured in weeks or months, not hours. For risk adjustment, that means HCC identification is claims-based and retrospective, chart chase workflows are manual, and recapture rates sit near the industry average of roughly 65%. For quality, HEDIS measurement happens after the fact, and Stars prediction relies on historical trend analysis rather than current-year trajectory modeling.
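To make the recapture metric concrete, here is a minimal Python sketch. It assumes the common industry definition: the share of HCCs documented for a member in the prior year that are documented again in the current year, counting only members enrolled in both years. The function name, member IDs, and HCC labels are hypothetical, and real recapture logic must also handle HCC hierarchies, model-version changes, and mid-year disenrollment.

```python
from typing import Dict, Set

# Hypothetical input shape: member ID -> set of HCC labels documented that year.
HccMap = Dict[str, Set[str]]


def recapture_rate(prior_year: HccMap, current_year: HccMap) -> float:
    """Share of prior-year HCCs documented again in the current year.

    Simplified sketch: only members present in both years are counted,
    and HCC hierarchies are ignored.
    """
    eligible = 0
    recaptured = 0
    for member_id, prior_hccs in prior_year.items():
        if member_id not in current_year:
            continue  # disenrolled member: not eligible for recapture
        eligible += len(prior_hccs)
        recaptured += len(prior_hccs & current_year[member_id])
    return recaptured / eligible if eligible else 0.0


if __name__ == "__main__":
    # Hypothetical sample data, not drawn from any real plan.
    prior = {
        "M001": {"HCC18", "HCC85"},
        "M002": {"HCC111"},
        "M003": {"HCC19"},
    }
    current = {
        "M001": {"HCC18"},
        "M002": {"HCC111"},
    }
    print(f"{recapture_rate(prior, current):.0%}")
```

With this sample data, two of the three eligible HCCs are documented again, so the sketch prints 67%. The missed HCC85 for M001 is exactly the kind of gap a retrospective, claims-only process tends to discover only after the measurement year has closed.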

None of this is a secret. It's the architecture most legacy vendors were designed around. The question is whether that architecture matches where CMS and the market are heading.

What "Measure and Improve" Actually Means in Practice

The distinction between measuring performance and improving performance sounds abstract until you put dollar signs on it. So let's do that.

Risk Adjustment: For a 200,000-member MA plan with an average RAF of 1.0, closing a 10% recapture gap on missed HCCs represents $20–35M in incremental revenue. The difference between a retrospective, claims-based approach and a prospective, clinical-plus-claims approach isn't a feature preference. It's the difference between capturing that revenue and watching it walk out the door.
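The dollar figure above depends on inputs the example does not state, chiefly the plan's payment rate and how much RAF the recaptured HCCs add. Here is a minimal sketch of the arithmetic, assuming the simplified relationship that MA payment scales linearly with RAF (payment per member-month is roughly base rate × RAF) and ignoring normalization, the coding-intensity adjustment, and county-level benchmark variation. The function name and every input value are hypothetical.

```python
def incremental_ra_revenue(
    members: int,
    base_rate_pmpm: float,
    raf_uplift: float,
    months: int = 12,
) -> float:
    """Annual revenue gained from a plan-wide average RAF increase.

    Simplified assumption: payment = base rate x RAF per member-month,
    so the gain is members x months x base rate x RAF uplift.
    Normalization and coding-intensity adjustments are ignored.
    """
    if members < 0 or base_rate_pmpm < 0 or months < 0:
        raise ValueError("members, base rate, and months must be non-negative")
    return members * months * base_rate_pmpm * raf_uplift


if __name__ == "__main__":
    # Hypothetical inputs: 200,000 members, a $1,000 PMPM base rate,
    # and recaptured HCCs raising the average RAF by 0.01.
    gain = incremental_ra_revenue(200_000, 1_000.0, 0.01)
    print(f"${gain:,.0f}")
```

With these hypothetical inputs the sketch prints $24,000,000. Under this linear model the plan's starting RAF of 1.0 does not change the increment; what matters is enrollment, the payment rate, and how much RAF the closed gaps actually add.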

Star Ratings: A 0.5 Star improvement for a 300,000-member plan can mean $30–60M in annual quality bonus differential. The gap between measuring HEDIS performance after the measurement year and intervening during the measurement year is the gap between knowing you missed the bonus and earning it.

RADV Audit Defense: With sample sizes expanding from 35 to 200 records, the financial exposure per audit has multiplied. A plan that can't defend its HCC coding at the member level — not just the population level — faces potential recoupment in the tens of millions per audit.

Legacy analytics platforms excel at quantifying these gaps. They can tell you exactly how much revenue you're missing, how many Stars you're leaving on the table, and which members represent your highest RADV risk. What they can't do is close the gaps they identify.

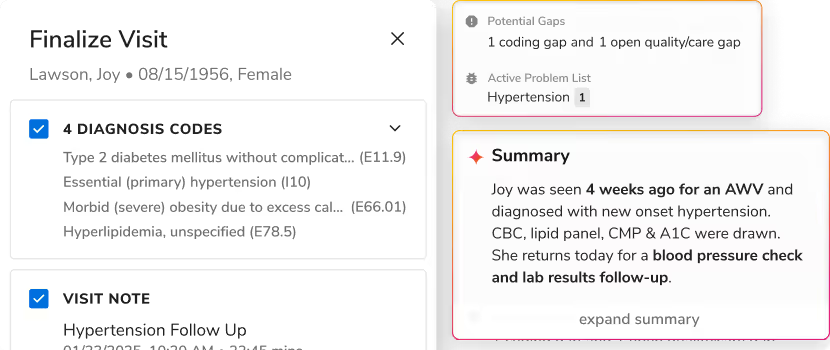

The Galaxy Difference: From Identification to Intervention

Innovaccer's Galaxy platform represents a fundamentally different architecture. Instead of measuring performance after the fact, Galaxy embeds AI directly into the workflows that determine performance. The platform doesn't just identify a missed HCC — it surfaces the clinical evidence needed to support it, routes it to the right coder, and tracks it through to capture. It doesn't just flag a HEDIS gap — it triggers the member outreach, schedules the appointment, and confirms the service completion.

This isn't a reporting upgrade. It's a workflow transformation. Galaxy operates on the same unified data foundation that powers Innovaccer's provider-side solutions, meaning payer teams have access to clinical data, not just claims data. For risk adjustment, that means HCC identification happens at the point of care, not months later during chart review. For quality, it means HEDIS gaps are identified and addressed in real time, not discovered during annual reconciliation.

The results speak for themselves: Galaxy customers report recapture rates above 85% compared to the industry average of 65%. For a 200,000-member plan, that 20-percentage-point difference represents $12–20M in incremental revenue annually. On the quality side, Galaxy-powered plans have achieved Star Rating improvements of 0.3 to 0.7 stars within a single measurement year — improvements that translate directly to quality bonus payments and member retention.

RADV Audit Preparedness: The New Competitive Advantage

With CMS expanding RADV audits to every eligible MA contract and increasing sample sizes to 200 records, audit preparedness has become a core operational competency. Legacy platforms approach RADV preparation as a compliance exercise: identify high-risk members, pull charts, and hope the documentation supports the codes.

Galaxy approaches RADV as a data integrity exercise. Because the platform captures clinical evidence at the point of care, not just during annual chart review, Galaxy customers maintain a real-time audit trail for every HCC. When CMS selects members for audit, the supporting documentation is already organized, validated, and defensible. The difference between reactive chart chase and proactive documentation integrity is the difference between surviving a RADV audit and thriving through it.
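One way to picture a per-HCC audit trail is as a record that links each submitted HCC to the clinical notes supporting it, checked before an audit arrives. The sketch below is hypothetical and is not Galaxy's data model: the class and field names are invented, and the readiness rule (at least one face-to-face note dated in the service year that addresses the condition under the widely used MEAT convention of Monitor, Evaluate, Assess, Treat) is a simplified assumption, not CMS's RADV review standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Set


@dataclass
class EvidenceNote:
    """Hypothetical pointer to a clinical note that supports an HCC."""
    note_id: str
    date_of_service: date
    face_to_face: bool
    meat_elements: Set[str] = field(default_factory=set)  # subset of {"M", "E", "A", "T"}


@dataclass
class HccClaim:
    """Hypothetical record tying a submitted HCC to its evidence."""
    member_id: str
    hcc: str
    service_year: int
    evidence: List[EvidenceNote] = field(default_factory=list)


def is_audit_ready(claim: HccClaim) -> bool:
    """Simplified readiness rule (an assumption, not the CMS RADV standard):
    at least one face-to-face note from the service year with a MEAT element."""
    return any(
        note.face_to_face
        and note.date_of_service.year == claim.service_year
        and bool(note.meat_elements & {"M", "E", "A", "T"})
        for note in claim.evidence
    )


def unsupported(claims: List[HccClaim]) -> List[HccClaim]:
    """HCCs that would still need chart retrieval or review before an audit."""
    return [c for c in claims if not is_audit_ready(c)]


if __name__ == "__main__":
    claims = [
        HccClaim("M001", "HCC85", 2024, [
            EvidenceNote("N1", date(2024, 3, 14), True, {"E", "T"}),
        ]),
        HccClaim("M002", "HCC111", 2024, [
            EvidenceNote("N2", date(2023, 11, 2), True, {"A"}),  # outside service year
        ]),
        HccClaim("M003", "HCC18", 2024),  # no linked evidence
    ]
    for c in unsupported(claims):
        print(c.member_id, c.hcc)
```

Running the sample flags M002 (its only note falls outside the service year) and M003 (no linked evidence), while M001 passes. The design point is that the check runs continuously as notes arrive, so gaps surface long before CMS selects a sample.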

The Strategic Question: Reporting or Revenue?

For payer executives evaluating their analytics platform, the question is no longer whether the vendor can measure performance accurately. Most established vendors can do that. The question is whether measurement alone is sufficient in a regulatory environment that rewards intervention speed over reporting precision.

CMS is not slowing down. RADV audits will continue expanding. Star Ratings methodology will continue evolving. The Medicare Advantage market will continue consolidating around plans that can operate at regulatory speed, not reporting speed.

The payers that thrive in this environment will be those running on platforms built for intervention, not just identification. Platforms that close gaps, not just measure them. Platforms that turn analytics into action.

Ready to move beyond measurement? See how Galaxy transforms payer analytics from reporting to revenue capture. Schedule a Galaxy demonstration with our payer solutions team.
