The Definitive Guide to Evaluating Agentic AI Solutions for Healthcare Enterprises

The surge of agentic AI in healthcare represents a fundamental shift, from passive automation to active, context-aware intelligence that can perceive, reason, learn, and act. As health systems face mounting clinical, financial, and operational complexity, evaluating agentic AI platforms requires a disciplined, domain-specific approach. This guide outlines how to identify the right workflows, data strategy, compliance measures, and vendor capabilities to ensure safe, explainable, and enterprise-ready deployment.

Understanding Agentic AI in Healthcare

Agentic AI in healthcare refers to AI systems that perceive, reason, act, and learn, allowing them to complete multi-step tasks autonomously while adapting to dynamic environments. In contrast to conventional AI, which primarily analyzes or classifies data, agentic AI operates in real time, responding to context and modifying its actions based on continuous feedback.
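
The perceive, reason, act, learn cycle can be pictured as a simple control loop. The sketch below is a minimal illustration rather than any vendor's implementation. The `SchedulingAgent` class, its fields, and the slot-length task are hypothetical, chosen only to show where feedback changes later behavior.

```python
from dataclasses import dataclass, field


@dataclass
class SchedulingAgent:
    """Hypothetical agent that books appointment slots and adapts from feedback."""
    buffer_minutes: int = 0  # learned adjustment added to each slot
    history: list = field(default_factory=list)

    def perceive(self, request: dict) -> dict:
        # Perceive: extract the relevant context from the incoming request.
        return {"visit_type": request["visit_type"], "base_minutes": request["base_minutes"]}

    def reason(self, context: dict) -> int:
        # Reason: choose a slot length from context plus what has been learned.
        return context["base_minutes"] + self.buffer_minutes

    def act(self, slot_minutes: int) -> dict:
        # Act: a real system would call a scheduling API; here we record the booking.
        booking = {"slot_minutes": slot_minutes}
        self.history.append(booking)
        return booking

    def learn(self, actual_minutes: int, booked_minutes: int) -> None:
        # Learn: if the visit overran its slot, widen future slots by 5 minutes.
        if actual_minutes > booked_minutes:
            self.buffer_minutes += 5

    def step(self, request: dict, actual_minutes: int) -> dict:
        booking = self.act(self.reason(self.perceive(request)))
        self.learn(actual_minutes, booking["slot_minutes"])
        return booking


agent = SchedulingAgent()
first = agent.step({"visit_type": "follow-up", "base_minutes": 15}, actual_minutes=22)
second = agent.step({"visit_type": "follow-up", "base_minutes": 15}, actual_minutes=18)
print(first["slot_minutes"], second["slot_minutes"])  # 15 20
```

The second booking is longer because the first visit overran. That feedback-driven adjustment is what separates an agent from a static classifier.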

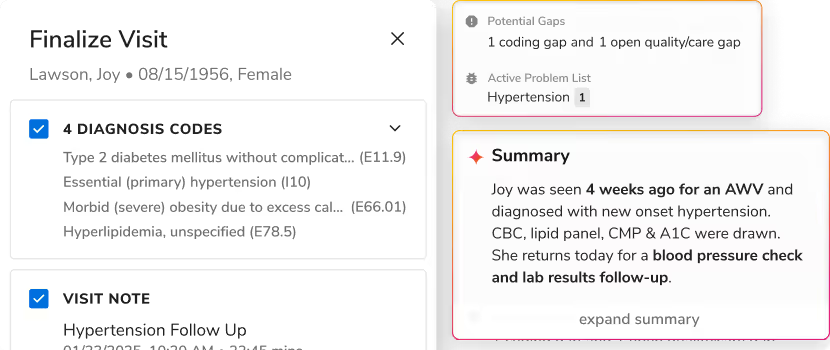

This adaptive capability makes agentic AI particularly suited for healthcare workflows such as patient triage, documentation assistance, scheduling optimization, or care coordination. For healthcare leaders, the promise lies not only in efficiency but in enabling more human-centered care through intelligent augmentation.

Identifying High-Value Healthcare Workflows for Agentic AI

Healthcare organizations should focus first on workflows that combine high operational burden with measurable ROI. Areas like patient access, prior authorization, documentation, and revenue cycle management are natural starting points.

| Workflow Domain | Sample Use Case | Impact Potential |
|---|---|---|
| Patient Access | Automated intake and eligibility verification | Reduced administrative workload |
| Clinical Documentation | Pre-populating EHR fields | Improved clinician efficiency |
| Patient Communication | Call summarization and triage | Enhanced service experience |
| Operations | Real-time volume forecasting | Optimized staffing and throughput |
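
One way to apply "high operational burden with measurable ROI" is a simple scoring pass over candidate workflows. The sketch below assumes hypothetical 1 to 5 ratings for burden, ROI measurability, and risk. The weights and ratings are placeholders that a team would set for itself, not benchmarks.

```python
# Hypothetical 1-5 ratings; replace with your organization's own assessments.
candidates = {
    "Patient Access": {"burden": 5, "roi": 4, "risk": 2},
    "Clinical Documentation": {"burden": 5, "roi": 3, "risk": 3},
    "Patient Communication": {"burden": 3, "roi": 3, "risk": 2},
    "Operations": {"burden": 4, "roi": 4, "risk": 1},
}

# Illustrative weights: reward burden and measurable ROI, penalize risk.
WEIGHTS = {"burden": 0.4, "roi": 0.4, "risk": -0.2}


def priority_score(ratings: dict) -> float:
    """Weighted sum of the ratings, rounded for readability."""
    return round(sum(WEIGHTS[k] * ratings[k] for k in WEIGHTS), 2)


ranked = sorted(candidates, key=lambda name: priority_score(candidates[name]), reverse=True)
for name in ranked:
    print(f"{name}: {priority_score(candidates[name])}")
```

The top-ranked workflow is a natural candidate for the first narrowly scoped pilot.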

Smart adoption begins with small, well-defined pilots. Limiting scope, for example to a single department or workflow, makes early outcomes easier to validate and contains risk before wider rollout. Platforms like Innovaccer’s data and AI solutions enable incremental scale through unified data foundations and governed workflows.

Evaluating Data Integration and Interoperability

High-performing agentic AI depends on complete, high-quality, and connected data. Any solution should integrate seamlessly with core systems—EHRs, labs, claims, and CRM platforms—to ensure real-time, contextual inputs.

Fragmented data introduces bias and risks unsafe decisions. Platforms must provide transparent data lineage, automated de-identification, and continuous bias detection to maintain performance integrity.
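
To make "transparent data lineage" and "automated de-identification" concrete, the sketch below removes a hypothetical set of direct-identifier fields from a record, adds a salted pseudonymous key, and appends a lineage entry describing the operation. It is illustrative only. The field names, the salt handling, and `DIRECT_IDENTIFIERS` are assumptions. Dropping a few fields is not sufficient on its own for HIPAA de-identification, which relies on Safe Harbor or Expert Determination.

```python
import hashlib
from datetime import datetime, timezone

# Hypothetical subset of direct identifiers. Real programs follow HIPAA Safe Harbor
# or Expert Determination, not a short hard-coded list.
DIRECT_IDENTIFIERS = {"name", "phone", "email", "mrn"}


def deidentify(record: dict, source: str, lineage: list) -> dict:
    """Drop direct identifiers, add a pseudonymous key, and log a lineage entry."""
    salt = "replace-with-managed-secret"  # assumption: a managed secret in practice
    pseudo_id = hashlib.sha256((salt + str(record["mrn"])).encode()).hexdigest()[:16]
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["pseudo_id"] = pseudo_id
    lineage.append({
        "source": source,
        "operation": "deidentify",
        "removed_fields": sorted(DIRECT_IDENTIFIERS.intersection(record)),
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return cleaned


lineage = []
row = {"mrn": "12345", "name": "Jane Doe", "phone": "555-0100", "dx_code": "E11.9", "age": 54}
clean = deidentify(row, source="ehr_extract", lineage=lineage)
print(sorted(clean))                 # ['age', 'dx_code', 'pseudo_id']
print(lineage[0]["removed_fields"])  # ['mrn', 'name', 'phone']
```

Keeping the lineage log separate from the cleaned data lets auditors see what was removed and from which source, without re-exposing the removed values.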

Interoperability is defined as the seamless, secure exchange and use of data between different health technology systems to support coordinated patient care and operations.

A strong data evaluation checklist includes:

- Verified EHR/claims integration

- Streaming and transformation layers

- PHI handling compliance with explicit safeguards

- Secure data access governance

Innovaccer’s platform exemplifies these requirements through its unified data model and prebuilt interoperability framework for enterprise healthcare systems.

Assessing Platform Architecture and Technology Stack

A resilient agentic AI platform should balance innovation with control. Key architectural features to assess include multi-agent orchestration, modular design, and support for scalable, hybrid deployments.

Robust implementations use Retrieval-Augmented Generation (RAG) to ground outputs in SOPs and clinical guidelines, making behavior more traceable and predictable, and often rely on frameworks such as LangChain or AutoGen to build and coordinate agents. Multi-agent orchestration and strong state management help IT teams monitor and refine agents without disrupting core workflows.
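
The grounding idea behind RAG can be shown without any framework. The system retrieves the most relevant guideline passages, then builds a prompt that cites their source IDs so every answer can be traced back. The sketch assumes a hypothetical in-memory SOP store and uses naive word-overlap scoring in place of the embedding search a production system would typically use. The LLM call itself is omitted.

```python
import re

# Hypothetical SOP snippets keyed by document ID.
SOP_STORE = {
    "SOP-12": "Prior authorization requests must include the CPT code and clinical notes.",
    "SOP-31": "Eligibility verification must be completed before scheduling imaging.",
    "SOP-44": "Escalate any request missing clinical notes to a human reviewer.",
}


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, k: int = 2) -> list:
    """Rank SOP passages by word overlap with the query (stand-in for vector search)."""
    q = tokenize(query)
    scored = sorted(SOP_STORE.items(), key=lambda item: len(q & tokenize(item[1])), reverse=True)
    return scored[:k]


def build_grounded_prompt(query: str) -> str:
    """Assemble a prompt that restricts the model to cited, retrieved sources."""
    passages = retrieve(query)
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"{context}\n\nQuestion: {query}"
    )


prompt = build_grounded_prompt("What must a prior authorization request include?")
print(prompt)
```

Because each retrieved passage carries its ID into the prompt, a reviewer can check any answer against the exact SOP text it was based on.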

| Capability | What to Look For | Why It Matters |
|---|---|---|
| RAG Anchoring | Context-linked retrieval | Reliable, guideline-based responses |
| Orchestration | Multi-agent coordination | Complex workflow automation |
| Security Tools | Encryption and isolation | PHI protection and HIPAA alignment |
| Deployment Flexibility | Cloud, edge, or hybrid | Adaptability across sites |
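
Multi-agent orchestration with explicit state management can be reduced to a coordinator that passes a shared state object through specialized agents and records every transition. The sketch below is framework-free and hypothetical. The three agent functions and the prior-authorization state fields are placeholders, not AutoGen or LangChain APIs.

```python
from typing import Callable, List, Tuple

State = dict
Agent = Callable[[State], State]


def intake_agent(state: State) -> State:
    # Hypothetical: extract the requested procedure from the raw request text.
    return {**state, "procedure": state["request"].split(":")[1].strip()}


def eligibility_agent(state: State) -> State:
    # Hypothetical rule: only the listed plans cover the procedure.
    return {**state, "eligible": state["plan"] in {"PlanA", "PlanB"}}


def routing_agent(state: State) -> State:
    # Send ineligible cases to a person instead of auto-submitting.
    return {**state, "route": "auto_submit" if state["eligible"] else "human_review"}


def orchestrate(agents: List[Agent], state: State) -> Tuple[State, list]:
    """Run agents in order and keep a snapshot after each step for monitoring or replay."""
    trace = []
    for agent in agents:
        state = agent(state)
        trace.append((agent.__name__, dict(state)))
    return state, trace


final, trace = orchestrate(
    [intake_agent, eligibility_agent, routing_agent],
    {"request": "prior-auth: MRI lumbar spine", "plan": "PlanC"},
)
print(final["route"])               # human_review
print([name for name, _ in trace])  # ['intake_agent', 'eligibility_agent', 'routing_agent']
```

Because each agent returns a new state instead of mutating shared globals, a team can inspect or replay the trace, or swap out a single agent, without touching the rest of the workflow.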

Innovaccer’s open, modular architecture supports this balance of flexibility and governance across on-premises and cloud environments.

Ensuring Explainability and Safety in Agentic AI

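Trust in AI depends on interpretability. Agentic AI systems should include explainable AI (XAI) capabilities that make their behavior understandable. Examples include feature-attribution methods such as SHAP or LIME for predictive model outputs, alongside readable decision trails for multi-step agent actions.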

Explainable AI (XAI) refers to methods and technologies that make the decision-making of AI systems transparent and understandable to human users.
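
To build intuition for what SHAP reports, consider the special case of a linear model with independent features. There, each feature's SHAP value reduces to its weight times its deviation from the feature's average: φ_i = w_i (x_i − E[x_i]). The sketch below computes those attributions by hand for a hypothetical readmission-risk score. The weights, features, and baseline means are invented for illustration, and agents built on language models generally also need decision trails and source citations to be explainable.

```python
# Hypothetical linear readmission-risk score: risk = BIAS + sum(w_i * x_i)
WEIGHTS = {"prior_admissions": 0.30, "age_over_65": 0.20, "missed_appointments": 0.10}
BIAS = 0.05
# Hypothetical population means, used as the expected-value baseline for each feature.
MEANS = {"prior_admissions": 1.0, "age_over_65": 0.4, "missed_appointments": 2.0}


def score(x: dict) -> float:
    return BIAS + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)


def linear_shap(x: dict) -> dict:
    """Exact SHAP values for a linear model with independent features: w_i * (x_i - E[x_i])."""
    return {f: WEIGHTS[f] * (x[f] - MEANS[f]) for f in WEIGHTS}


patient = {"prior_admissions": 3, "age_over_65": 1, "missed_appointments": 1}
contributions = linear_shap(patient)
for feature, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{feature}: {value:+.2f}")

# Local accuracy: attributions sum to score(patient) minus the score at the baseline.
assert abs(sum(contributions.values()) - (score(patient) - score(MEANS))) < 1e-9
```

A clinician reading this output sees which factors pushed the score up or down relative to a typical patient, which is the kind of transparency the definition above describes.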

To prevent unintended actions, vendors should provide configurable action vocabularies, hard limits, and full audit logs. A safety-first evaluation should confirm:

- Built-in XAI modules and reporting dashboards

- Comprehensive decision trails for audits

- Automated error detection and rollback mechanisms
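
Configurable action vocabularies, hard limits, audit logs, and rollback can be combined in a small guard layer that sits between an agent and the systems it touches. The sketch below is hypothetical. The action names, the limit of 20 reschedules per run, and the in-memory undo stack are illustrative assumptions, not a specific vendor's controls.

```python
from datetime import datetime, timezone

ALLOWED_ACTIONS = {"send_reminder", "reschedule_appointment"}  # action vocabulary
HARD_LIMITS = {"reschedule_appointment": 20}  # max executions per run


class ActionGuard:
    def __init__(self):
        self.audit_log = []   # every attempt, allowed or blocked
        self.counts = {}      # executions per action, for hard limits
        self.undo_stack = []  # (action, params, undo_fn) for rollback

    def _log(self, action, params, outcome):
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "params": params,
            "outcome": outcome,
        })

    def execute(self, action, params, do, undo):
        """Run do(params) only if the action is allowed and under its hard limit."""
        if action not in ALLOWED_ACTIONS:
            self._log(action, params, "blocked: not in vocabulary")
            return False
        if self.counts.get(action, 0) >= HARD_LIMITS.get(action, float("inf")):
            self._log(action, params, "blocked: hard limit reached")
            return False
        do(params)
        self.counts[action] = self.counts.get(action, 0) + 1
        self.undo_stack.append((action, params, undo))
        self._log(action, params, "executed")
        return True

    def rollback(self):
        """Undo executed actions in reverse order, logging each reversal."""
        while self.undo_stack:
            action, params, undo = self.undo_stack.pop()
            undo(params)
            self._log(action, params, "rolled back")


calendar = {}


def book(p):
    calendar[p["patient"]] = p["slot"]


def unbook(p):
    calendar.pop(p["patient"], None)


guard = ActionGuard()
guard.execute("reschedule_appointment", {"patient": "P1", "slot": "09:00"}, book, unbook)
guard.execute("cancel_all_appointments", {}, book, unbook)  # not in the vocabulary
guard.rollback()
print(calendar)                                   # {}
print([e["outcome"] for e in guard.audit_log])
```

Blocked attempts are logged as well as executed ones, so the audit trail shows what the agent tried to do, not only what it did.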

Innovaccer’s emphasis on transparent governance and validation workflows supports these safety imperatives throughout the AI lifecycle.

Confirming Regulatory Compliance and Liability Frameworks

Regulatory rigor is non-negotiable. Agentic AI platforms must comply with HIPAA for the privacy and security of protected health information (PHI), with GDPR wherever EU personal data is processed, and, where applicable, with FDA Software as a Medical Device (SaMD) guidance.

FDA’s SaMD guidance applies a risk-based framework to software intended for medical purposes, such as diagnosing conditions or informing treatment decisions, with expectations for clinical evaluation and ongoing performance monitoring.

Buyers should verify:

- HIPAA/PHI compliance documentation

- Data minimization and de-identification standards

- Explicit liability agreements clarifying accountability

- Written incident and rollback procedures

Innovaccer’s compliance framework adheres to these standards with robust PHI protection, audit controls, and traceability mechanisms.

Implementing Observability and Continuous Performance Monitoring

Agentic AI requires ongoing oversight. Platforms should offer observability dashboards that expose agent task metrics, response times, and performance bottlenecks. Employing methods like “LLM-as-a-Judge” enables automated scoring of AI outputs for accuracy, safety, and compliance.

Observability is the ability to measure and analyze an AI system’s internal states and outputs to manage risks proactively.

A robust monitoring workflow involves:

- Instrumentation and logging of agent activity

- Automated performance scoring and alerts

- Feedback loops for retraining and improvement
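
The monitoring workflow above can be sketched in three parts: a log of agent task records, a scoring step, and threshold-based alerts. In this hypothetical sketch, `judge` is a rule-based stand-in for an LLM-as-a-Judge call, which in practice would send the output and a scoring rubric to a separate model. The field names and alert thresholds are assumptions.

```python
import statistics

# Instrumentation: hypothetical log of agent task records.
task_log = [
    {"task": "summarize_call", "latency_ms": 820, "output": "Patient requests refill; no red flags."},
    {"task": "summarize_call", "latency_ms": 950, "output": "Patient reports chest pain."},
    {"task": "summarize_call", "latency_ms": 4100, "output": ""},
    {"task": "summarize_call", "latency_ms": 700, "output": "Billing question; routed to finance."},
]


def judge(output: str) -> float:
    """Stand-in for an LLM-as-a-Judge call; returns a score from 0 to 1."""
    if not output:
        return 0.0  # empty summary fails outright
    if "chest pain" in output.lower() and "escalat" not in output.lower():
        return 0.5  # safety rubric: urgent symptom without an escalation note
    return 1.0


THRESHOLDS = {"p95_latency_ms": 3000, "min_mean_score": 0.9}


def check(records: list) -> list:
    """Score records and return human-readable alerts for any breached threshold."""
    latencies = [r["latency_ms"] for r in records]
    # Last of 19 cut points = 95th percentile; "inclusive" avoids extrapolating past the max.
    p95 = statistics.quantiles(latencies, n=20, method="inclusive")[-1]
    mean_score = statistics.mean(judge(r["output"]) for r in records)
    alerts = []
    if p95 > THRESHOLDS["p95_latency_ms"]:
        alerts.append(f"latency p95 {p95:.0f} ms exceeds {THRESHOLDS['p95_latency_ms']} ms")
    if mean_score < THRESHOLDS["min_mean_score"]:
        alerts.append(f"mean judge score {mean_score:.2f} below {THRESHOLDS['min_mean_score']}")
    return alerts


for alert in check(task_log):
    print("ALERT:", alert)
```

Flagged records, such as the unescalated chest-pain summary, are the natural inputs to the feedback loop for retraining and improvement.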

This continuous oversight is consistent with the FDA’s emphasis on monitoring deployed AI models for real-world performance, as reflected in its Good Machine Learning Practice guiding principles, and is a cornerstone of Innovaccer’s trusted AI operations model.

Conducting Pilots and Measuring Clinical and Operational Impact

Before scaling, organizations should conduct structured pilot programs.

Stepwise approach:

- Define clinical and operational KPIs (e.g., time saved, throughput improved).

- Run pilots in controlled environments with strong human oversight.

- Benchmark performance data and safety outcomes before expansion.

Key metrics include:

- Workflow automation percentage

- Turnaround time and error reduction

- Human correction rate
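
The three metrics above can be computed directly from pilot records. The sketch assumes a hypothetical record format in which each task notes whether the agent completed it without manual handoff, whether a human corrected its output, and its turnaround time. The baseline turnaround is a placeholder for the organization's own pre-pilot measurement.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotTask:
    automated: bool        # completed by the agent without manual handoff
    human_corrected: bool  # a reviewer edited the agent's output
    turnaround_min: float  # minutes from request to completion


def pilot_metrics(tasks: list, baseline_turnaround_min: float) -> dict:
    automated = [t for t in tasks if t.automated]
    corrected = sum(t.human_corrected for t in automated)
    avg_turnaround = mean(t.turnaround_min for t in tasks)
    return {
        "automation_pct": 100 * len(automated) / len(tasks),
        # Correction rate is measured only over tasks the agent actually handled.
        "human_correction_rate_pct": 100 * corrected / max(len(automated), 1),
        "turnaround_reduction_pct": 100 * (1 - avg_turnaround / baseline_turnaround_min),
    }


tasks = [
    PilotTask(automated=True, human_corrected=False, turnaround_min=12),
    PilotTask(automated=True, human_corrected=True, turnaround_min=18),
    PilotTask(automated=False, human_corrected=False, turnaround_min=40),
    PilotTask(automated=True, human_corrected=False, turnaround_min=10),
]
metrics = pilot_metrics(tasks, baseline_turnaround_min=35)
print(metrics)
```

Tracking the correction rate alongside automation percentage guards against a pilot that looks highly automated only because reviewers are quietly fixing its output.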

Iterative refinement based on pilot learnings ensures sustainable value and trust. Innovaccer supports this iterative process through measurable outcomes tracking and scalable pilot frameworks.

Best Practices for Governance and Risk Management

Enterprise deployment calls for cross-functional governance. Leading organizations establish governance councils that unite clinicians, compliance officers, data scientists, and IT leaders.

Recommended practices include:

- Defining strict autonomy boundaries with human-in-the-loop checkpoints

- Maintaining clear rollback protocols for any malfunction

- Conducting regular risk audits and policy reviews

- Tracking compliance metrics and escalation processes
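
Autonomy boundaries with human-in-the-loop checkpoints often come down to a routing rule. The agent acts on its own only when an action is low enough in risk and its confidence clears a threshold; everything else waits for a person. The risk tiers and the 0.80 and 0.95 thresholds below are hypothetical values that a governance council would set and periodically review.

```python
from enum import Enum


class Risk(Enum):
    LOW = 1     # e.g. appointment reminders
    MEDIUM = 2  # e.g. rescheduling
    HIGH = 3    # e.g. anything touching orders or medications


# Hypothetical policy: minimum confidence for autonomous action per risk tier.
AUTONOMY_POLICY = {
    Risk.LOW: 0.80,
    Risk.MEDIUM: 0.95,
    Risk.HIGH: None,  # never autonomous: always requires human sign-off
}


def route(risk: Risk, confidence: float) -> str:
    """Return 'autonomous' only if policy allows it for this tier and confidence."""
    threshold = AUTONOMY_POLICY[risk]
    if threshold is None or confidence < threshold:
        return "human_review"
    return "autonomous"


print(route(Risk.LOW, 0.92))     # autonomous
print(route(Risk.MEDIUM, 0.92))  # human_review
print(route(Risk.HIGH, 0.99))    # human_review
```

Keeping the policy in a single table makes it easy for the governance council to audit and adjust boundaries without changing agent code.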

This governance ecosystem reduces exposure to model drift, bias, and liability. Innovaccer’s integrated governance capabilities help organizations operationalize these best practices with confidence.

Selecting the Right Agentic AI Vendor for Healthcare Enterprises

Selecting the right vendor starts with alignment to healthcare realities—not generic AI retrofits. The ideal partner combines domain experience with proven data security, interoperability, and pilot support.

A vendor evaluation framework should assess:

| Vendor Criterion | Key Indicator |
|---|---|
| Healthcare Use Cases | Documented real-world outcomes |
| Privacy Controls | Configurable data governance |
| Human Oversight Design | Built-in human-in-the-loop modules |
| Implementation Support | White-glove onboarding and monitoring |
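
The criteria in the table can be turned into a weighted scorecard, so that findings from due diligence produce a comparable number for each vendor. The weights, the 1 to 5 ratings, and the vendor names below are hypothetical. The point is the mechanics, including a floor that disqualifies any vendor rated too low on a must-have criterion regardless of its other strengths.

```python
# Hypothetical weights (summing to 1.0) for the criteria in the vendor table.
CRITERIA_WEIGHTS = {
    "healthcare_use_cases": 0.30,
    "privacy_controls": 0.30,
    "human_oversight_design": 0.25,
    "implementation_support": 0.15,
}
MUST_HAVE_FLOOR = {"privacy_controls": 3}  # disqualify below this rating

# Hypothetical 1-5 ratings gathered from reference checks and testing.
ratings = {
    "Vendor X": {
        "healthcare_use_cases": 4, "privacy_controls": 5,
        "human_oversight_design": 4, "implementation_support": 3,
    },
    "Vendor Y": {
        "healthcare_use_cases": 5, "privacy_controls": 2,
        "human_oversight_design": 5, "implementation_support": 5,
    },
}


def weighted_score(r: dict):
    """Return the weighted score, or None if any must-have floor is not met."""
    if any(r[c] < floor for c, floor in MUST_HAVE_FLOOR.items()):
        return None
    return round(sum(CRITERIA_WEIGHTS[c] * r[c] for c in CRITERIA_WEIGHTS), 2)


for vendor, r in ratings.items():
    print(vendor, weighted_score(r))
```

Vendor Y would lead on a plain weighted sum, but the privacy floor removes it from consideration, which mirrors the guidance to start from requirements rather than product claims.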

Innovaccer, with its healthcare-native cloud and AI architecture, provides these capabilities through unified data integration, interoperability, and governance frameworks designed specifically for health enterprises. Above all, evaluation should proceed from workflow needs—not product claims. Reference checks, documentation review, and integration testing are essential for due diligence.

Frequently Asked Questions

What is agentic AI and how does it differ from standard AI in healthcare?

Agentic AI autonomously perceives, reasons, acts, and learns across workflows. Unlike static AI tools, it manages adaptive, multi-step processes that evolve with clinical or operational contexts.

How does agentic AI improve healthcare workflows without replacing human judgment?

It automates repetitive, rule-based tasks and delivers real-time recommendations, allowing clinicians to focus on complex decisions. Platforms like Innovaccer’s reinforce human oversight at every step.

What are the key safety measures to look for in an agentic AI platform?

Look for explainability features, strict action boundaries, transparent audit trails, and built-in human oversight that keeps final control of consequential decisions with people.

How can healthcare organizations ensure compliance when deploying agentic AI?

Choose healthcare-specific platforms that align with HIPAA, GDPR (where applicable), and relevant FDA requirements, and that include secure PHI handling with clear monitoring protocols. Innovaccer’s compliance model is purpose-built for these needs.

What are practical use cases of agentic AI in healthcare operations?

Typical examples include automating documentation, accelerating prior authorizations, triaging patient communications, and forecasting volumes to optimize resources—all supported effectively by platforms such as Innovaccer’s.
